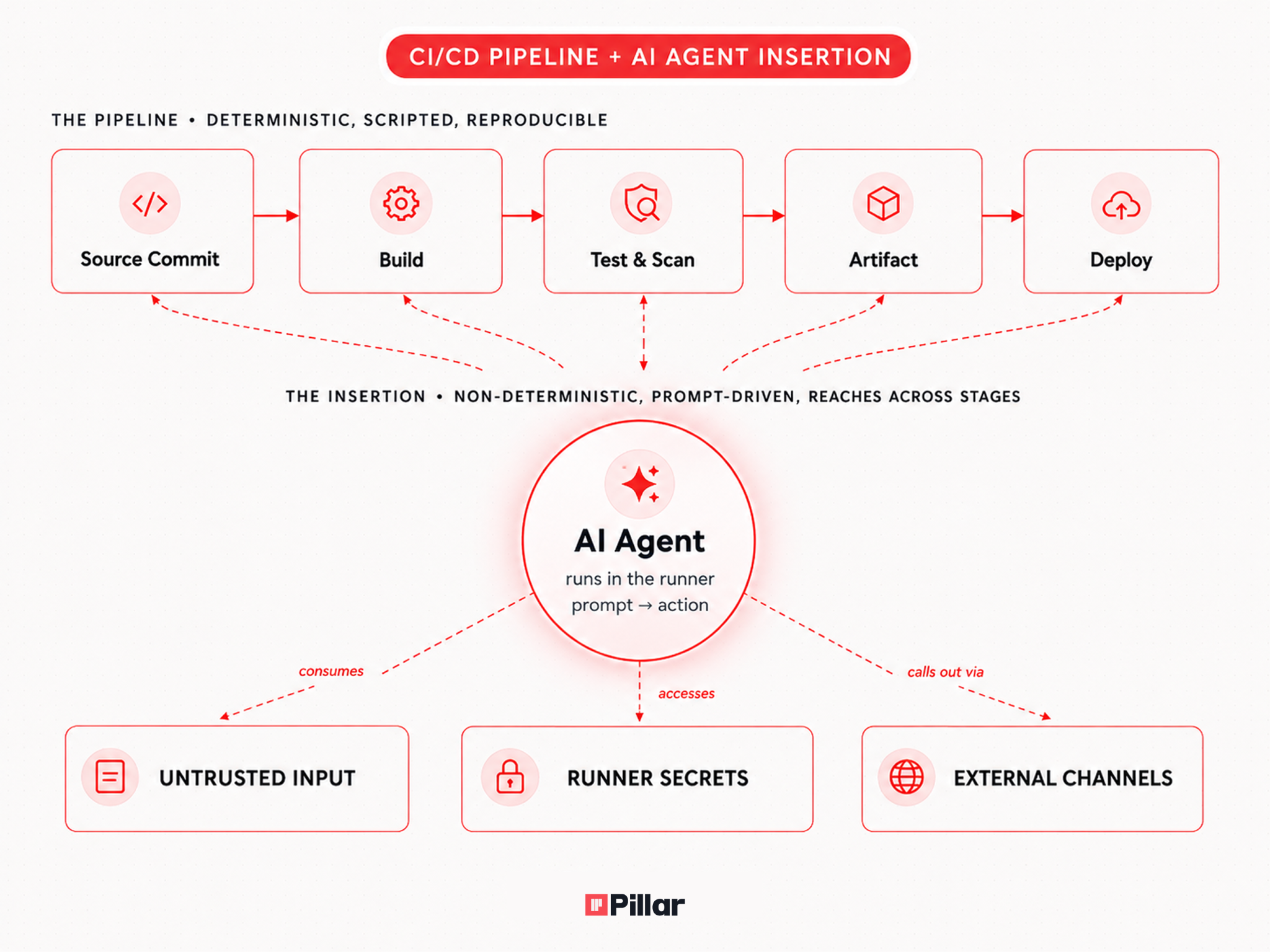

For two decades CI/CD was the deterministic part of the supply chain. You committed code, scripted steps ran, artifacts came out the other side. Pipelines failed loudly when something unexpected happened, because nothing unexpected was supposed to happen.

That assumption is dying.

A new class of integrations now embeds autonomous AI coding agents directly inside the pipeline. We covered the platform response in our launch post for Pillar for Agentic CI/CD. The question we want to answer carefully here is what class of risk actually arrived with these agents, and where the existing security stack quietly fails to see it.

The Risks Security Leaders Should Be Tracking

1. Excessive agency and a blast radius nobody sized

Give an agent shell access and write permissions on the repo, and a single successful manipulation hands the attacker the same authority as the agent. The threat model is closer to an insider threat than to a code review tool, with one important difference: the "insider" gets steered by anyone whose text reaches the prompt.

Most teams adopting these integrations never sized that blast radius. They treated the agent like a smarter linter and granted it permissions a linter would never have asked for: write access to source, the ability to push commits, a shell on the build host. The Google supply-chain compromise Pillar Security researcher Dan published is what the worst-case version of that looks like in practice: a public GitHub issue, a triage agent, and a path all the way to arbitrary commits on main of a 103k-star repo.

2. The first CI/CD attack class that doesn't require committing code

Prompt injection here is a code execution primitive, because the model is wired to a shell and a git remote. A PR title, an issue comment, a code comment, a markdown file in the repo, the body of a bug report, anything the agent reads is a delivery vector for promptware.

What makes the attack class new is the entry cost. An attacker only needs to write English (or any other language the model understands) somewhere the agent will eventually read it. The Google `issues: opened` trigger we exploited on gemini-cli had no author gate, so a fresh GitHub account and a well-crafted issue body were enough to fire the workflow.

3. Credentials at the agent's fingertips

Pipelines are where secrets live. Cloud deployment keys, package registry tokens, signing credentials, database passwords, third-party API keys, all of them get injected into runner environments at execution time.

Every agent integration we have catalogued operates inside that same environment. A non-deterministic agent and sensitive credentials in the same process is the configuration we have spent years training developers to avoid in production code. CI now ships it as a feature.

The exfiltration path doesn't even require a sophisticated exploit. An agent that can run a shell can run curl. An agent that can write code can write code that prints an environment variable. An agent that has been prompt-injected can do either on instruction. In the Google case, the workflow author explicitly removed `GITHUB_TOKEN` from the agent's environment, and the workflow still got compromised because `actions/checkout` had persisted credentials to `.git/config` on disk. Removing the token from the environment doesn't matter if the filesystem still holds it.
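To make the on-disk half of that problem concrete, here is a minimal sketch of a pre-flight check that looks for credential-shaped entries in a checkout's `.git/config` before an agent step runs. The function names and patterns are our own illustration, not part of any tool mentioned in this post. It assumes the credential shows up either as an `extraheader` entry carrying an Authorization value or as a token embedded in a remote URL. Other storage locations are possible and are not covered.

```python
import re
from pathlib import Path

# Assumption: persisted checkout credentials appear as an "extraheader"
# Authorization entry or as userinfo embedded in a remote URL. These patterns
# are illustrative and not an exhaustive list of where a token can land.
SUSPICIOUS_PATTERNS = [
    re.compile(r"^\s*extraheader\s*=\s*authorization:", re.IGNORECASE),
    re.compile(r"^\s*url\s*=\s*https://[^/\s@]+@", re.IGNORECASE),
]


def find_persisted_credentials(config_text: str) -> list[int]:
    """Return 1-based line numbers that look like stored credentials.

    Only line numbers are returned so the secret itself never reaches a log.
    """
    hits = []
    for lineno, line in enumerate(config_text.splitlines(), start=1):
        if any(pattern.search(line) for pattern in SUSPICIOUS_PATTERNS):
            hits.append(lineno)
    return hits


def scan_checkout(repo_root: str) -> list[int]:
    """Scan <repo_root>/.git/config; return [] if the file does not exist."""
    config_path = Path(repo_root) / ".git" / "config"
    if not config_path.is_file():
        return []
    return find_persisted_credentials(config_path.read_text(errors="replace"))
```

A job could call `scan_checkout(".")` ahead of the agent step and fail if the list is non-empty. Where the checkout step supports it, turning off credential persistence (for `actions/checkout`, the `persist-credentials: false` input) removes the entry at the source. The scan is a backstop, not a substitute.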

4. The runtime supply chain

Most agentic integrations aren't pinned, vendored dependencies. The pipeline pulls them at job time. Sometimes that means a package manager. Sometimes a curl-to-bash one-liner. Sometimes a remote include from a URL that resolves to whatever the upstream maintainer pushed last.

So the trust boundary on a CI run now extends to every transitive maintainer of every agent the pipeline pulls. Compromise the install script, compromise the build. The same supply-chain failure modes that produced the most damaging breaches of the last five years apply here, with one amplifier: the dependency you just installed is allowed to read your code, run commands, and reach the network. A separate exploit isn't required to do harm. Normal operation is the harm.
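As a rough illustration of what auditing for this looks like, the sketch below scans workflow text for two of the patterns described above: action references that are not pinned to a full commit SHA, and remote scripts piped straight into a shell. It assumes GitHub Actions-style `uses:` lines. The function name and regular expressions are hypothetical, and the line-by-line matching is a simplification that will miss anything a real YAML parser would be needed for.

```python
import re

# A reference counts as pinned here only if it ends in a 40-hex-character
# commit SHA. Tags and branch names can be moved by the upstream maintainer.
USES_RE = re.compile(r"^\s*-?\s*uses:\s*['\"]?([^\s'\"#]+)")
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")
PIPE_TO_SHELL_RE = re.compile(r"\b(curl|wget)\b[^|\n]*\|\s*(sudo\s+)?(ba|z)?sh\b")


def find_unpinned(workflow_text: str) -> list[tuple[int, str]]:
    """Return (line number, reason) pairs for mutable references."""
    findings = []
    for lineno, line in enumerate(workflow_text.splitlines(), start=1):
        match = USES_RE.match(line)
        if match:
            ref = match.group(1)
            # Local actions and container images are out of scope for this sketch.
            if ref.startswith("./") or ref.startswith("docker://"):
                continue
            _, _, version = ref.partition("@")
            if not FULL_SHA_RE.match(version):
                findings.append((lineno, f"unpinned action: {ref}"))
        elif PIPE_TO_SHELL_RE.search(line):
            findings.append((lineno, "remote script piped to a shell"))
    return findings
```

Run against a workflow file's contents, an empty result means neither pattern was found. It does not mean the pipeline is free of runtime-resolved dependencies, since package-manager installs and remote includes need their own checks.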

5. External context expansion via tools

The newest accelerant is the set of bridges that let agents pull in context from outside the repo and act on external systems: CLI tools, documentation, project trackers, customer support systems, knowledge bases.

Each new context source is a new place an attacker can plant instructions that the agent will execute on your runner. The threat model is no longer "review the diff." It's "review every system the agent can read from."

A Governance Shift for Security Leaders

Tooling matters, and our launch post covers the controls we ship for this. But the strategic move is treating agentic CI/CD as identity-and-access work rather than a productivity feature. A few principles worth adopting:

- Get runtime visibility and protection on the runner. The four principles below are configuration moves, and configuration is where you should start. But an agent's effective authority is decided after the workflow file is read, when the model picks which tool to call or credential to reach for. Static analysis can tell you what an agent might do. Only runtime telemetry from the runner itself tells you what it actually did, and only a runtime control can stop it mid-execution. Pillar runs the same endpoint agent that protects coding agents on developer workstations directly on CI/CD runners for exactly this reason.

- Treat every agent as a privileged identity. Give it a name, an owner, a documented purpose, and a scope. If you wouldn't grant a contractor the same permissions, don't grant them to a tool that takes instructions from pull request comments.

- Constrain trigger sources. The most dangerous configuration is an agent triggered by inputs from outside the trust boundary. Those triggers should require explicit approval gates, not autonomous execution.

- Minimize secret co-location. An agent that reviews code does not need deployment credentials. Split runners and split scopes so the most permissive agents see the fewest secrets.

- Pin and vendor your agents. Treat them like any other production dependency. Unpinned references and curl-bash installers aren't acceptable in code that ships to customers. They aren't acceptable in code that ships your code either.
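The trigger-source principle above can be sketched as a small gate that runs before the agent step. This is a minimal example, assuming a GitHub Actions runner, where the event payload is available as JSON at the path in `GITHUB_EVENT_PATH` and issue, comment, and pull request objects carry an `author_association` field. The function names and the choice of trusted associations are ours and should be adapted to your own trust boundary.

```python
import json
import os

# Assumption: only these associations sit inside the trust boundary.
TRUSTED_ASSOCIATIONS = {"OWNER", "MEMBER", "COLLABORATOR"}


def author_association(event: dict) -> str:
    """Return the association of whoever triggered the event.

    A comment is checked first because comment events also embed the parent
    issue, and the commenter is the one who fired the workflow.
    """
    for key in ("comment", "issue", "pull_request"):
        obj = event.get(key)
        if isinstance(obj, dict) and "author_association" in obj:
            return str(obj["author_association"])
    return "NONE"  # fail closed when the payload has no recognizable author


def agent_may_run(event: dict) -> bool:
    """True only when the triggering author is inside the trust boundary."""
    return author_association(event) in TRUSTED_ASSOCIATIONS


def gate_from_env() -> int:
    """Read the runner's event payload; return a process exit code."""
    with open(os.environ["GITHUB_EVENT_PATH"], encoding="utf-8") as fh:
        event = json.load(fh)
    if agent_may_run(event):
        return 0
    print("Trigger author is outside the trust boundary; hold for approval.")
    return 1
```

A workflow step could call `sys.exit(gate_from_env())` so that untrusted triggers stop before the agent starts. Note the limit of this check: it gates who fired the workflow, not what the agent will read. A trusted maintainer commenting on an issue written by an outsider still puts outsider text in front of the model.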

What This Adds Up To

The risk isn't that AI agents are uniquely dangerous. The risk is that CI/CD is uniquely powerful, and we have spent twenty years hardening it against deterministic threats while cheerfully handing the keys to non-deterministic ones.

Agentic CI/CD is going to keep expanding. New agents will keep arriving faster than governance frameworks can absorb them. The teams that handle this well will recognize what just happened: their pipeline grew a workforce.
